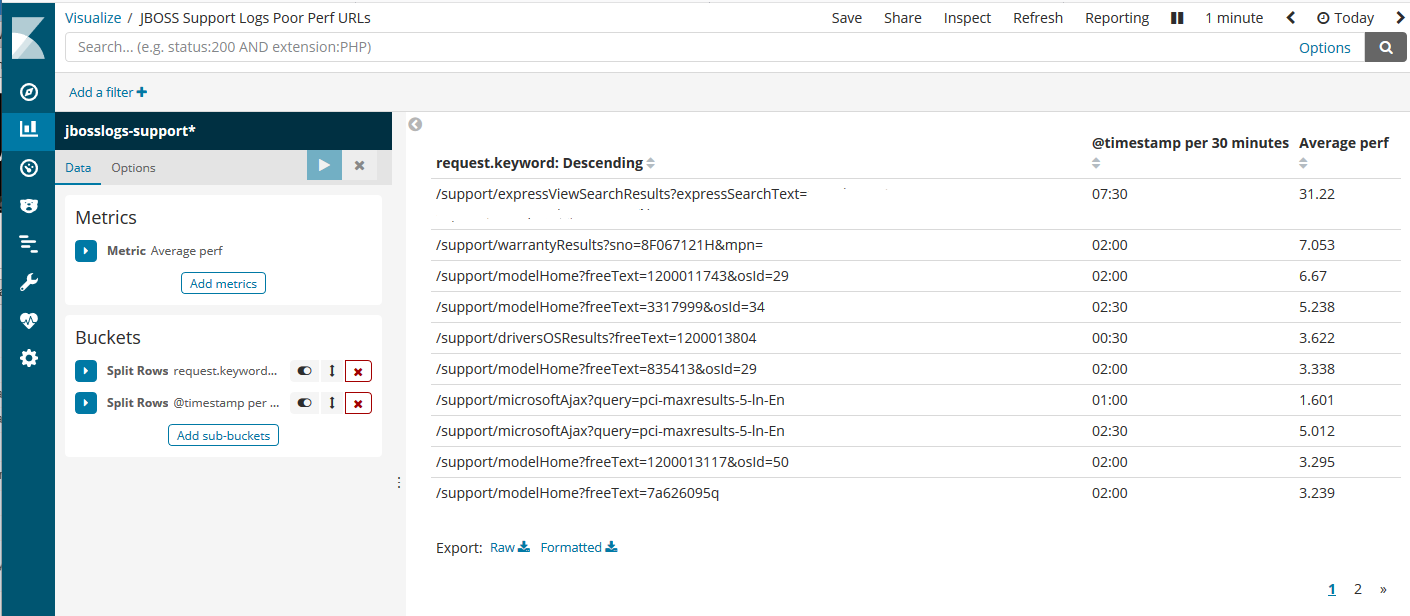

The Apache web server (HTTPD) logs are full of useful information. One method to data mine the Apache logs is using command line programs such as grep and AWK to do exact counts if certain occurrences (ie. 404 errors). A more recent tool that’s extremely useful is Elasticsearch. Using logstash, the logs are fed into, and indexed into Elasticsearch engine, so the data can be easily discovered/visualized via Kibana.

An interesting issue encountered in an Enterprise production environment is through the use of a load balancer (LB) and/or web application firewall (WAF). The resulting Apache logs are not reporting the correct client source IP addresses. The logs would show the load balancer IP address – which is not useful for customer profiling/marketing purposes.

192.168.0.2 – – [19/Nov/2018:00:00:12 -0800] “GET /images/support/frontend/toshiba-200.png HTTP/1.1” 200 5237 “https://support.toshiba.com/support/staticContentDetail” “Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/66.0.3359.117 Safari/537.36” “139.130.4.5, 192.168.0.1”

In this example, 192.168.0.1 is the IP address of the LB and 192.168.0.2 is for the WAF. The LB, in this case, can not automatically report to the WAF the actual client source IP, so it had to do it via X-Forwarded-For HTTP header. The way this LB is configured, last entry in each line of the log has the pair value (in quotes) of the source and LB IP addresses.

As shown it’s possible to parse through logs using Logstash and Filebeat. Similarly, it can be done for Apache web logs. In this case, however, some data clean-up is needed so the default Apache2 logstash grok filter can be used. AWK is the command line tool of choice. First breakdown the fields and merge quoted fields as one field:

function merge_fields(start, stop) {

#printf "Merge fields $%d to $%d\n", start, stop;

if (start >= stop)

return;

merged = "";

for (i = start; i <= stop; i++) {

if (merged)

merged = merged OFS $i;

else

merged = $i;

}

$start = merged;

offs = stop - start;

for (i = start + 1; i <= NF; i++) {

#printf "$%d = $%d\n", i, i+offs;

$i = $(i + offs);

}

NF -= offs;

}

# Merge quoted fields together.

{

start = stop = 0;

for (i = 1; i <= NF; i++) {

if (match($i, /^"/))

start = i;

if (match($i, /"$/))

stop = i;

if (start && stop && stop > start) {

merge_fields(start, stop);

# Start again from the beginning.

i = 0;

start = stop = 0;

}

}

}

Then just pick and choose what fields to display, in the order that’s grok-able by the logstash Apache2 plugin:

{

gsub("\"","",$11); split($11,ipaddr,"\,"); printf "%s", ipaddr[1];

for (i = 2; i <= 10; i++) {

printf " %s", $i;

}

printf "\n";

}

EDIT: Note the output of printf needs to be clean, with no extra spaces. Otherwise, logstash’s grokfilter will not be able to parse properly!

The “gsub” AWK function is a global search and replace, used to remove the quotes out of the 11th field that contains a quoted pair of IP addresses. Using the “split” function, the comma separated values can now be assigned into an array “ipaddr”. As the source client IP address, take the first value in the array.

Finally, just feed the entire access_log file into this AWK script as a pipeline, then send the output into a local file for filebeat to pick up, or remotely to logstash.

Important Note: This AWK script will not detect any inconsistent pattern other than what’s already assumed above. For example, if the X-Forwarded-For IP values are not provided, then nothing will be generated in the source IP output. Thus, producing a grok parse failure in Logstash. To avoid this, use “grep -v” to exclude any of those anomalies – as well as other keywords that need not be tracked, such as LB health checks, etc.